Understanding Immortal Objects in Python 3.12: A Deep Dive into Python Internals

A detailed look at the 'immortal' objects introduced in Python 3.12 and the performance problems they are meant to solve

Advanced Python programmers are aware that recent Python releases have focused heavily on improving performance. Among the various improvements, an intriguing one is 'immortalization', rolled out in Python 3.12. Within the implementation of CPython, certain objects such as None, True, and False act as global singletons, shared across the interpreter instead of being created afresh each time. From the programmer’s perspective, these objects are immutable, but inside the interpreter that was not entirely true before Python 3.12: every new reference to one of these global singletons made the interpreter increment its reference count, just as it does for regular Python objects. This resulted in a few performance issues, including cache invalidation and potential race conditions (in a GIL-free world).

To solve these problems, Python came up with the concept of 'immortality'. The method of object immortalization marks these objects as 'immortal,' ensuring their reference count remains unchanged. While this is a very simplistic explanation, there are some interesting details behind it. If you're interested in learning about the performance issues that led to this development and wish to look inside CPython to see its implementation details, read on!

Note: Throughout the article, I am exclusively talking about the CPython implementation of Python. Even if I say Python, I am talking about CPython.

Background: CPython's Reference Counting & Object Representation

Let’s ground our discussion with some essential background on CPython's mechanisms related to the object representation and reference counting.

CPython's Reference Counting for Memory Management

CPython relies on reference counting for managing its runtime memory. Each object, on instantiation, starts with a reference count of one. When the object is assigned to another variable, its reference count increases. When a variable goes out of scope, the reference count decreases. Finally, when the object’s reference count hits 0, the object is deallocated.
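Here is a minimal sketch of this behaviour using sys.getrefcount from the standard library. The helper name refcount_changes is hypothetical. The absolute numbers that sys.getrefcount reports can differ between CPython versions (the call itself may hold a temporary reference), so the sketch only compares values before and after a reference is added or dropped.

```python
import sys


def refcount_changes():
    """Return how the refcount of a fresh object moves as references come and go."""
    obj = object()                      # a new, ordinary (non-immortal) object
    baseline = sys.getrefcount(obj)

    holder = [obj]                      # the list now holds one more reference
    after_store = sys.getrefcount(obj)

    holder.clear()                      # dropping that reference undoes the increment
    after_drop = sys.getrefcount(obj)

    return after_store - baseline, after_drop - baseline


if __name__ == "__main__":
    added, net = refcount_changes()
    print(f"after storing in a list: {added:+d}, after clearing the list: {net:+d}")
```

Working with differences rather than absolute counts keeps the example independent of how many internal references a particular interpreter version happens to hold.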

Interested in learning about reference counting internals? Check out my recent article on that topic.

Understanding Object Representation in CPython

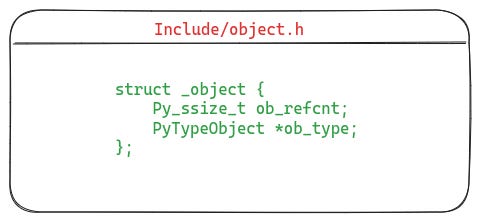

In CPython's implementation, each object’s memory representation begins with a header (defined by the struct PyObject), containing the reference count and the type of the object. The CPython code, by typecasting, treats every object as having type PyObject, in part to ensure a uniform internal API, which accepts and returns objects of type PyObject. This approach simplifies the interpreter code and makes it more easily extendable.
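The header layout can be peeked at from Python with ctypes. This is only a sketch under stated assumptions: it assumes a default CPython build (not free-threaded, not a special debug build) in which the header is a pointer-sized reference count field followed by the type pointer, and it relies on the CPython detail that id() returns the object's address. The function name peek_type_pointer is hypothetical.

```python
import ctypes
import sys
import sysconfig


def peek_type_pointer(obj):
    """Read the type pointer stored in obj's PyObject header.

    Assumes a default CPython build, where the header is a pointer-sized
    reference count field followed by a pointer to the object's type.
    Returns None when that assumption is known not to hold.
    """
    if sys.implementation.name != "cpython":
        return None
    if sysconfig.get_config_var("Py_GIL_DISABLED"):
        return None                                  # free-threaded builds use a different header
    address = id(obj)                                # in CPython, id() is the object's address
    type_offset = ctypes.sizeof(ctypes.c_ssize_t)    # skip over the refcount field
    return ctypes.c_void_p.from_address(address + type_offset).value


if __name__ == "__main__":
    value = [1, 2, 3]
    pointer = peek_type_pointer(value)
    print("header type pointer matches id(type(value)):", pointer == id(type(value)))
```

Reading raw memory like this is for exploration only; nothing about the layout is guaranteed by the language.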

Mutability in Immutable Python Objects

Having understood the reference counting mechanism and the object representation in Python, we are better equipped to understand the curious case of Python's immutable objects like None, True, False, and "" (the empty string).

These objects are not created anew every time they are used; instead, they exist as globally shared singleton objects. Despite their immutable status, these objects don't escape Python's standard procedure: they carry the same PyObject header as any other Python object. This has an interesting consequence: every use of these objects triggers a mutation, because their reference count is updated.
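The effect can be observed from Python itself. The sketch below (refcount_delta is a hypothetical helper, not part of CPython) measures how far the reported reference count of None moves while 1,000 extra references to it exist. On interpreters older than 3.12 I would expect it to move by 1,000; on 3.12 and later, where None is immortal, I would expect it not to move at all.

```python
import sys


def refcount_delta(obj, extra_references=1000):
    """Return how much sys.getrefcount(obj) changes while extra references exist."""
    before = sys.getrefcount(obj)
    holder = [obj] * extra_references   # a list holding many references to obj
    during = sys.getrefcount(obj)
    del holder
    return during - before


if __name__ == "__main__":
    delta = refcount_delta(None)
    print(f"CPython {sys.version_info.major}.{sys.version_info.minor}: "
          f"refcount of None moved by {delta} for 1000 new references")
```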

To the end user, these objects seem unchanged. However, this subtle internal mutation causes several performance issues, which prompted the need for a solution. The next section discusses these issues and how immortalization addresses them.

Why Immortalization? The Performance Impact

Updating the reference counts of immutable objects hurt the performance of Python applications in subtle ways. The impact was felt especially by large projects, such as Instagram. PEP-683 gives the following reasons to motivate the implementation of object immortalization.

CPU Cache Invalidation

To improve performance, the CPU caches the data it is working with, in the hope that if the code references the same data again, it can be loaded faster. However, if the data gets modified, cached copies of it can be invalidated, and the data may then have to be fetched again the next time it is needed.

In case of immutable objects, this becomes a problem. Even though their actual data is constant, their reference count gets updated, thus invalidating their cache entries. If immutable objects could be prevented from getting their reference counts changed, it would bring some amount of performance improvement.

In addition to that, in a multi-threaded application, if multiple threads (running on different cores) are simultaneously accessing the same object, they end up invalidating each other’s caches because of reference count updates, causing an even more severe performance regression.

Data Races

The Python interpreter uses the Global Interpreter Lock (GIL) to synchronize access to the interpreter when multiple threads are running. The GIL allows only one thread at a time to run within the interpreter, which means that Python threads do not execute truly in parallel.

However, Python is heading towards a world where the scope of the GIL is being reduced. For example, the per-interpreter GIL work (see PEP-684) changes the scope of the GIL so that each sub-interpreter has its own GIL. There is also ongoing work as part of PEP-703 to remove the GIL. This opens up opportunities for true parallelism in Python. Having globally shared objects which are constantly mutated was a huge roadblock for projects trying to remove the GIL.

Impact on Copy-on-Write Applications

Lastly, there was a huge performance impact on applications using Copy-on-Write (CoW). For instance, Instagram runs a Django-based service for serving its frontend. They use a pre-forking web server architecture for scaling their application. In this scheme, the server forks multiple processes upon its initialization, and incoming client requests are delegated to one of the child processes by the server.

In a fork-based process creation model, when the parent process creates a new child process, the child process receives a copy of the parent’s memory space. Rather than copying all of that memory up front, most operating systems use a CoW model to speed up process creation. In CoW, the child process shares the parent process’ memory pages in read-only mode. This not only saves the CPU from duplicating the memory pages but also improves memory efficiency. If either of the processes tries to write to a shared page, the operating system creates a private copy of that page for the process doing the write.

Instagram found that, even though they mostly used immutable objects, copy-on-write was still triggered, leading to rising memory usage over time. Truly immutable objects would rectify this problem plaguing applications using CoW.

These performance penalties clearly highlight the need for immortalization. Next, let’s see how it's been implemented.

Inside Python Immortalization: Implementation Details

Python 3.12 introduced the concept of immortal objects to address the performance and concurrency issues discussed above. An object marked as immortal lasts through the interpreter's lifetime, and its reference count never updates.

Although the idea of an immortal object seems simple, it wasn't easy to implement. Python developers had to ensure that their solution was ABI and API compatible with older CPython versions while not introducing new performance issues. Now, let’s dive deeper into its implementation.

How does CPython Represent Immortal Objects?

Each object in the CPython implementation has a header represented by the struct PyObject (shown in the listing below). This header contains the reference count of the object and its type details.

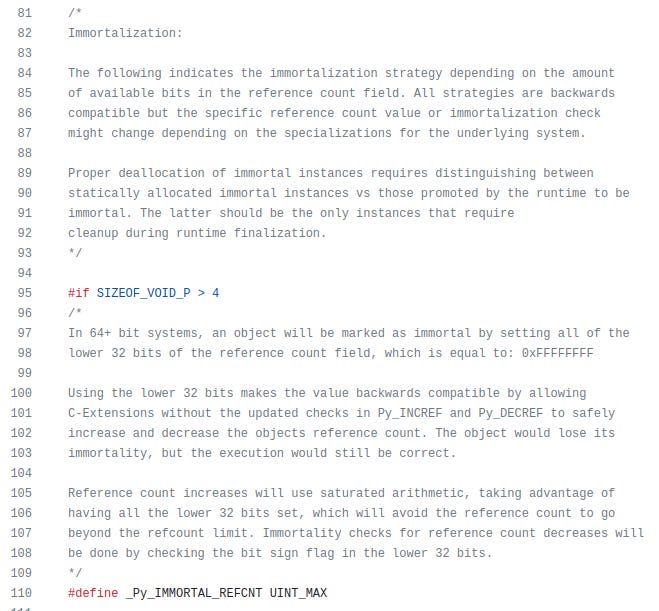

To mark an object as immortal, its reference count is set to a special value. On 64-bit systems, this is done by setting all of the low 32 bits of the reference count field, which effectively sets the reference count of immortal objects to the magic number 4294967295. However, as this is an implementation detail, it may change in the future. The following comment in the Include/object.h file explains this in detail.

The last line of the above screenshot also shows the definition of the constant _Py_IMMORTAL_REFCNT, which is the magic number used to represent the reference count of immortal objects on 64-bit hardware.
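The arithmetic behind the magic number is easy to check. In the sketch below, IMMORTAL_REFCNT_312_64BIT is a hypothetical name for the value described above, and the second print shows whatever the running interpreter reports for None. On a 64-bit CPython 3.12 I would expect the two to match; other versions and 32-bit builds may use a different value, so the sketch prints the number instead of asserting it.

```python
import sys

# Value described above for 64-bit builds of CPython 3.12: all low 32 bits set.
# This is an implementation detail; other versions are free to use another value.
IMMORTAL_REFCNT_312_64BIT = (1 << 32) - 1


def reported_refcount_of_none():
    """Return whatever reference count this interpreter reports for None."""
    return sys.getrefcount(None)


if __name__ == "__main__":
    print(f"all low 32 bits set: {IMMORTAL_REFCNT_312_64BIT} "
          f"({IMMORTAL_REFCNT_312_64BIT:#x})")
    print(f"sys.getrefcount(None) here: {reported_refcount_of_none()}")
```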

Managing Reference Count Updates for Immortal Objects

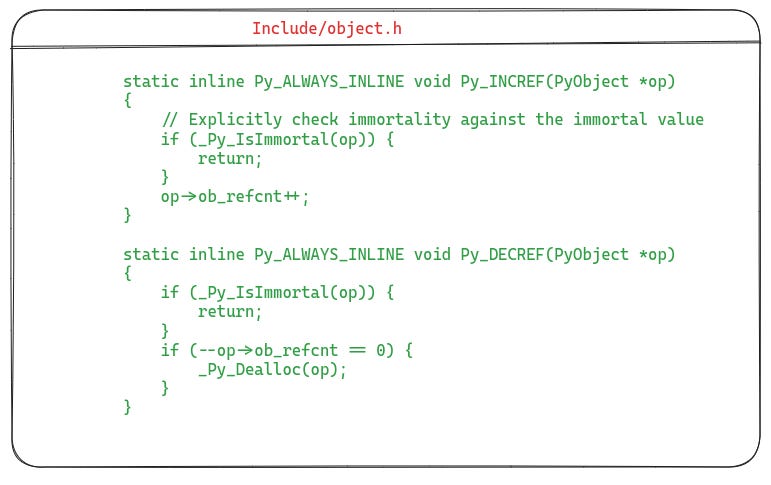

We know that the reference counts are never changed for immortal objects. But, how is this ensured? To keep things uniform and simple, the CPython code implements the functions Py_INCREF and Py_DECREF which are called whenever the reference count of an object needs to be incremented or decremented. The magic lies in these functions. The following listing shows a simplified version of their definitions from Include/object.h.

As you can see, both Py_INCREF and Py_DECREF perform a check to see whether the object is immortal or not, to make sure that they do not modify an immortal object.
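To make the control flow concrete, here is a toy model of that logic in pure Python. It is not CPython's code: ModelObject, incref, decref and is_immortal are hypothetical names, the equality test is a simplification (the real C code tests the field in its own way), and the "deallocation" is just a flag.

```python
# Stand-in for _Py_IMMORTAL_REFCNT on 64-bit CPython 3.12 (used by this model only).
IMMORTAL_REFCNT = (1 << 32) - 1


class ModelObject:
    """Toy stand-in for a PyObject header; only the refcount is modelled."""

    def __init__(self, ob_refcnt=1):
        self.ob_refcnt = ob_refcnt
        self.deallocated = False


def is_immortal(obj):
    # Simplification: the model compares against the magic value directly.
    return obj.ob_refcnt == IMMORTAL_REFCNT


def incref(obj):
    if is_immortal(obj):
        return                      # immortal: leave the count untouched
    obj.ob_refcnt += 1


def decref(obj):
    if is_immortal(obj):
        return                      # immortal: never decremented, never freed
    obj.ob_refcnt -= 1
    if obj.ob_refcnt == 0:
        obj.deallocated = True      # real CPython would run the deallocator here


if __name__ == "__main__":
    regular = ModelObject()
    immortal = ModelObject(ob_refcnt=IMMORTAL_REFCNT)
    for obj in (regular, immortal):
        incref(obj)
        decref(obj)
        decref(obj)
    print("regular: ", regular.ob_refcnt, regular.deallocated)
    print("immortal:", immortal.ob_refcnt, immortal.deallocated)
```

The point of the model is the early return: for an immortal object neither function writes to the header, so the memory holding it is never dirtied.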

Examining the Implementation of Some Immortal Objects

Until now, we have seen how an object is marked immortal. Let's examine a couple of examples of immortal object implementations. Specifically, we will explore how the None object and small integers are immortalized.

Immortalization of None Object

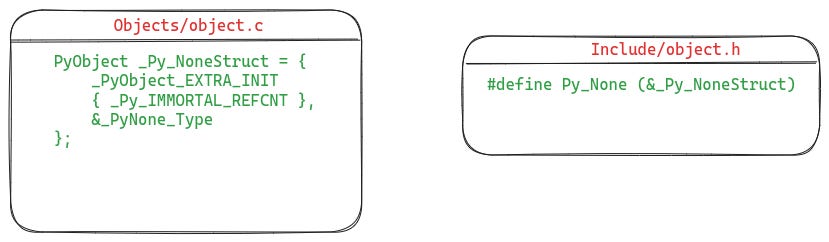

The None object is represented as a statically allocated instance of the PyObject struct. This instance is called _Py_NoneStruct and it is defined in Objects/object.c. In order to access this object, a convenient macro called Py_None is defined in Include/object.h which returns a pointer to the _Py_NoneStruct object. These definitions are shown in the illustration below.

As you can see in the definition of _Py_NoneStruct, its reference count is being set to the magic value _Py_IMMORTAL_REFCNT, which marks it as immortal.

The Process of Immortalizing Small Integers

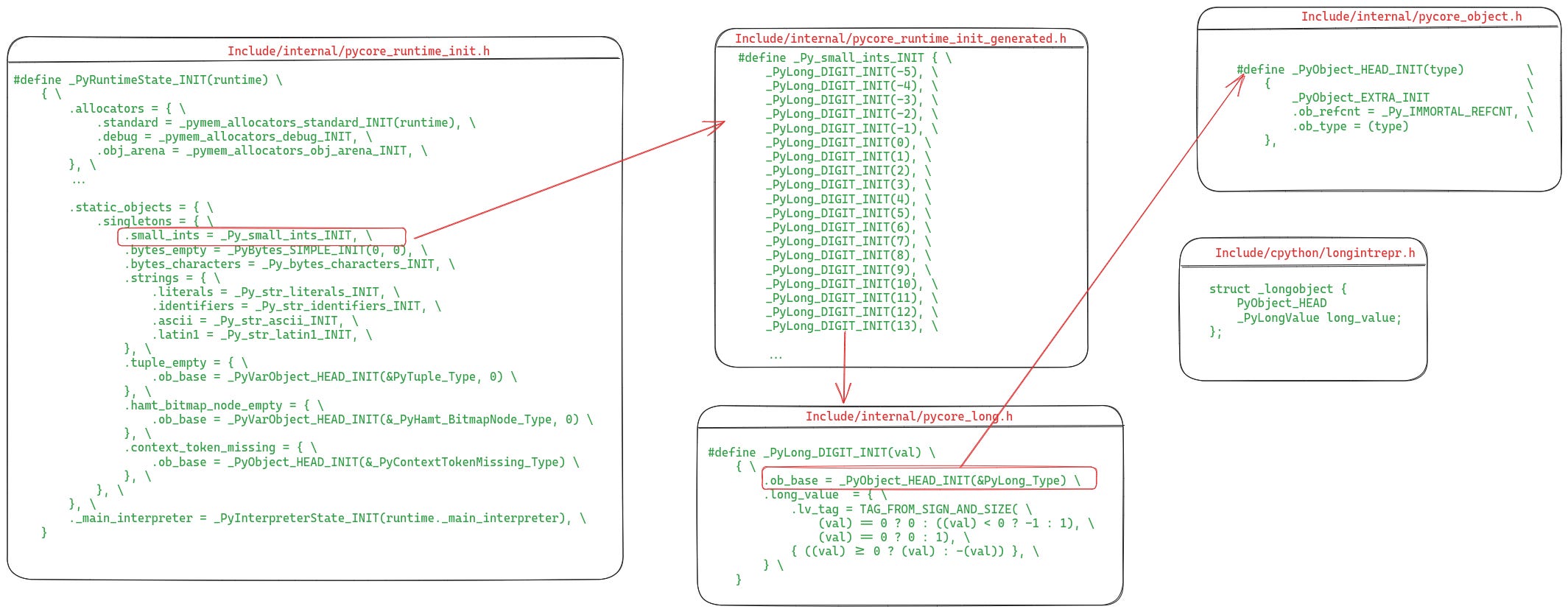

In CPython, small integers in the range -5 (inclusive) to 257 (exclusive) are statically allocated during the initialization of the interpreter. These integer objects are globally shared as singletons, and therefore, they have been made immortal as well. Let’s see how this happens. The following code listing shows the relevant parts.

Let’s break it down:

The macro _PyRuntimeState_INIT is called at the startup of the interpreter where the runtime state of the interpreter is initialized. As part of this, the singleton objects are also created, which includes the initialization of small integers (shown highlighted).

These small integers are initialized by the special macro _PyLong_DIGIT_INIT. This macro creates instances of the _longobject struct, which represents the integer object in CPython.

The _PyLong_DIGIT_INIT macro calls _PyObject_HEAD_INIT in order to initialize the header of the integer object. As you can see in the definition of _PyObject_HEAD_INIT, it sets the ob_refcnt field to the special value _Py_IMMORTAL_REFCNT to mark the object as immortal.
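Both properties of small integers can be probed from Python. In this sketch, is_cached_small_int and refcount_moves are hypothetical helpers. The first checks whether two integers of the same value, built at run time, turn out to be the same object; the second checks whether the reported reference count changes while extra references exist. The exact cached range and the immortality of these objects are CPython implementation details, so the script prints what it observes on the running interpreter.

```python
import sys


def is_cached_small_int(value):
    """Return True if two ints of this value, built at run time, are the same object."""
    # Parsing from a string at run time keeps the compiler from merging constants.
    first = int(str(value))
    second = int(str(value))
    return first is second


def refcount_moves(obj, extra_references=1000):
    """Return True if the reported refcount changes while extra references exist."""
    before = sys.getrefcount(obj)
    holder = [obj] * extra_references
    moved = sys.getrefcount(obj) != before
    del holder
    return moved


if __name__ == "__main__":
    for value in (-6, -5, 0, 256, 257):
        print(f"{value:>4}: cached={is_cached_small_int(value)}")
    print(f"refcount of 100 moves: {refcount_moves(int('100'))}")
    print(f"refcount of a large int moves: {refcount_moves(int('1099511627776'))}")
```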

Evaluating the Performance Implications of Immortalization

The objective of immortalization was to rectify performance issues caused by the mutation of immutable objects. However, its implementation introduces some overhead for the CPython interpreter.

As we saw earlier, the Py_INCREF and Py_DECREF functions now include a check for immortal objects. That means an extra check is made every time they are called. As these functions are called to update the reference count of every single object, the cost of these immortality checks adds up. Nonetheless, Instagram reported in their blog post that clever use of register allocation reduced the impact of this regression to within two percent.

Wrapping Up

In summary, Python 3.12 introduced immortalization to tackle performance issues caused by modifying the reference count of otherwise immutable objects, such as None, True, and False. These performance challenges included CPU cache line invalidation, copy-on-write, and race conditions in the absence of the GIL (upon its removal). Immortalization resolves these issues by setting the reference count of immutable objects to a special magic number and incorporating checks in the Py_INCREF and Py_DECREF functions to ensure they never touch an immortal object.

Even though immortalization is an internal implementation detail of the CPython interpreter, it shows the complications that exist within the interpreter and how small design decisions can result in performance and concurrency problems down the road. On the bright side, recent Python releases have steadily progressed in fixing many of these performance issues. With recent changes such as the specializing adaptive interpreter and the upcoming GIL removal work, the future looks promising for Python!